The Art of Choosing a Programming Language

2018-03-18

Programmers, like professionals in other fields, are passionate about their tools. Programming languages are among the main elements in a coder's toolbox. They allow their users to express solutions through code and to tackle a large variety of problems in many domains.

Programming is also an art, as described in Donald Knuth's article Computer Programming as an Art, and in certain respects programming languages can be seen as art styles.

As can be expected with anything that people are passionate about, whether it is viewed as a tool or an art style, coders can bond or argue over programming languages. Like the debates of the philosophers of old, these discussions can go into quite some depth, but to the outsider the arguments made or the sentiments behind them can be quite opaque.

If programming languages had existed back then, I am sure they would have been a hotly argued topic. School of Athens by Raphael

Here I hope to shed some light, for the casual observer, on what makes programmers passionate about these languages and why some prefer one over another. Such analysis can be quite subjective, and very much dependent on the writer's experiences and preferences, but I will try my best to give an impartial overview.

In theory many general purpose programming languages are capable of doing the same things. The most commonly used programming languages are Turing complete, meaning that they can all simulate the workings of any Turing machine. Without getting into the full description of what a Turing machine is, for the reader unfamiliar with the concept, this means that any of these languages can in principle express the same computations.

There are thousands of programming languages. Some are older, going back to the 50s, 60s and 70s, and still see considerable use. Others have been released within the last 10 years and have gained a considerable following. Given that, as I have mentioned, all these languages can theoretically do the same things, one could wonder why new languages are designed.

History

Historically, the original computers were instructed in pure machine language, that is, sequences of 0s and 1s. Writing programs this way can be tedious and error prone, and the resulting code can be very difficult to read. This is one of the reasons why assembly languages were created. These are languages that are still very much tied to the instruction set of a particular machine, but in a more human readable form, where symbolic names are given to machine instructions. These would then be translated to pure machine language to instruct the machine.

While reading and writing programs becomes easier this way, using assembly languages still has disadvantages. First, these languages are still very much tied to the hardware. Different instruction architectures can mean that a program for the same goal would have to be written differently for each architecture. Second, for many people the instructions that one has to write this way are still very low level. The argument is made that with a better set of abstractions over assembly, programs can be written in a better way. A program written with such abstractions could then be translated (compiled) to the machine code specific to the required architecture.

The question of which abstractions to use is at the heart of why there are so many different programming languages. People have different ideas on what these abstractions might be, and on what the benefits and drawbacks of applying them are. This is why people keep designing and using newer programming languages. In the following sections we go through some aspects of these abstractions.

Much like ancient wonders standing next to a modern city, with programming languages the old also gives rise to the new, and often co-exists with it. The Giza Pyramids © Robbster1983

Paradigms and Style

As mentioned before, there are different opinions on how programs should be constructed. People hold opinions on various subjects: how the code is organized and how it is executed, among other elements. This is very similar to how art styles function. For example, the same subject can be painted in two differing styles.

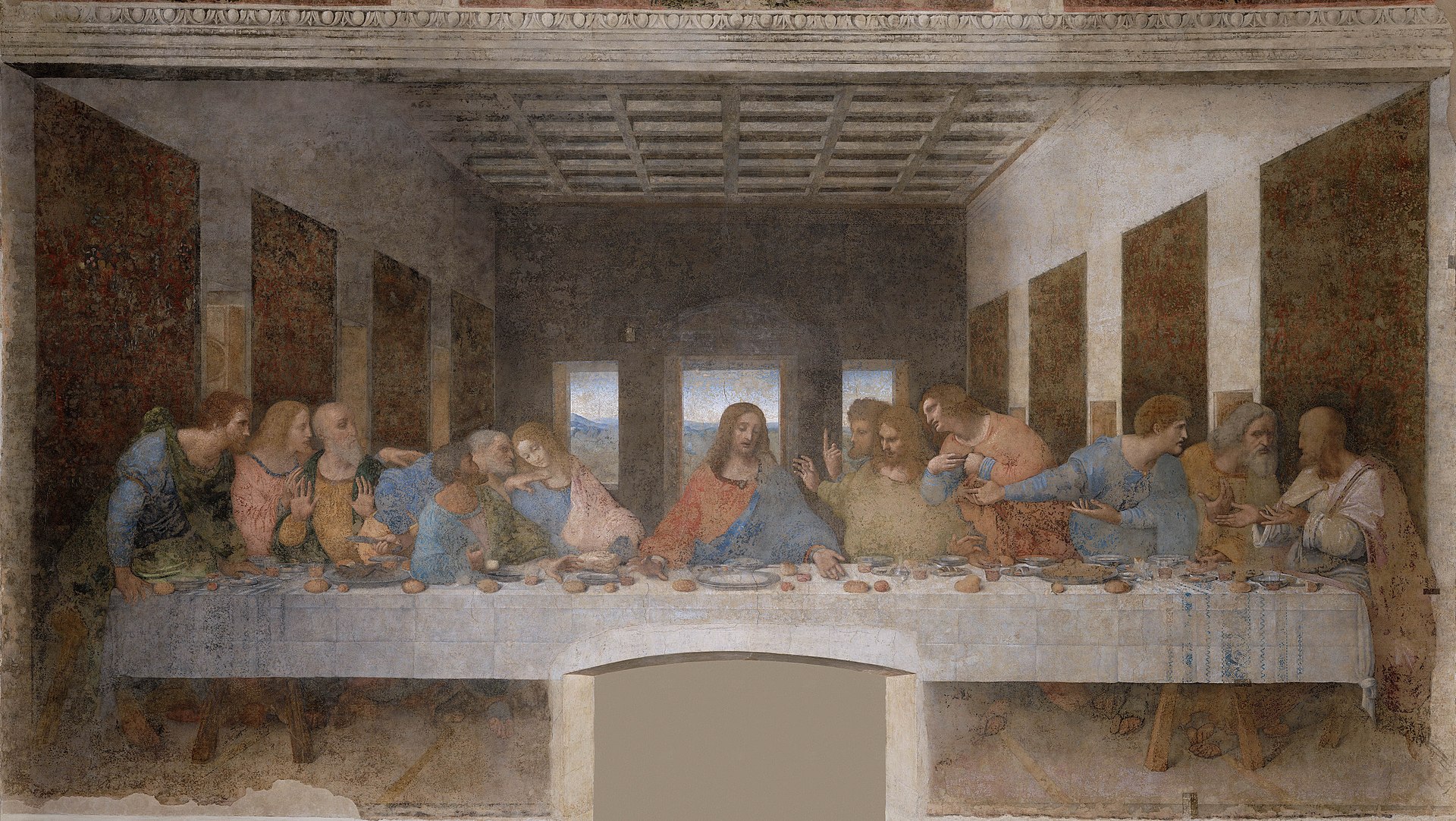

The Last Supper (Leonardo da Vinci), one of the most famous Renaissance style paintings.

The Last Supper (Tintoretto) depicts the same subject but in a Mannerist, proto-Baroque style.

Programming languages can be classified by style into programming paradigms, based on the common elements in their approaches. Some paradigms include:

Imperative

Imperative code can be seen as a set of commands for the computer to perform. This paradigm matches very closely how computer hardware works, as nearly all computer hardware is designed to execute machine language, which is itself written in an imperative style.

Procedural

One of the ways one can structure a program is to group together a series of commands. These groups, procedures, can then be called, used or reused as a single entity.
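To make the difference between these first two paradigms concrete, here is a minimal sketch in Python (chosen purely for illustration; the procedure name `average` is made up for this example). The first half is a plain sequence of commands, the second half groups the same commands into a reusable procedure:

```python
# Imperative style: a sequence of commands that update state step by step.
numbers = [4, 8, 15]
total = 0
for n in numbers:
    total = total + n
mean = total / len(numbers)


# Procedural style: the same commands grouped into a named, reusable procedure.
def average(values):
    """Return the arithmetic mean of a non-empty list of numbers."""
    running_total = 0
    for value in values:
        running_total = running_total + value
    return running_total / len(values)


same_mean = average(numbers)        # reuse on the original data
other_mean = average([1, 2, 3, 4])  # reuse on different data
```

Once the commands have a name, the caller no longer needs to care how the mean is computed, only that it is.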

Object-Oriented

Object oriented code uses the notion of objects to organize code. An object is an encapsulation of related state and behavior. For example, consider software that needs to represent a vehicle. The elements of the state that describe the object, such as colour and make, are called attributes. Pieces of functionality related to the object, such as calculating the price of the vehicle, are called methods. These concepts allow reuse, as the objects for a car and a motorcycle can share functionality.
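The vehicle example could be sketched roughly as follows in Python. The class names, the `base_price` attribute and the surcharge rule are all assumptions made up for this illustration:

```python
class Vehicle:
    """Shared state (attributes) and behaviour (methods) for all vehicles."""

    def __init__(self, colour, make, base_price):
        self.colour = colour          # attribute
        self.make = make              # attribute
        self.base_price = base_price  # attribute

    def price(self):
        # Default pricing rule, inherited by every kind of vehicle.
        return self.base_price

    def describe(self):
        return f"{self.colour} {self.make} costing {self.price()}"


class Car(Vehicle):
    def price(self):
        # Hypothetical rule: cars carry a fixed surcharge.
        return self.base_price + 500


class Motorcycle(Vehicle):
    pass  # reuses Vehicle.price unchanged


car = Car("red", "ExampleMake", 10000)
bike = Motorcycle("black", "OtherMake", 8000)
```

Both kinds of vehicle share `describe` without repeating it, while `Car` overrides only the part that differs.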

Declarative

In declarative programming, one describes, or more aptly declares what the problem is as opposed to detailing the steps on how to solve it. This contrasts with imperative programming, where one gives the instructions on how to solve it directly.

Functional

Functional programming is one form of declarative programming, where programs are constructed using functions that are analogous to, and inspired by, mathematical functions. The intention is that these functions are ideally side effect free: their output depends solely on their input. This can make code easier to understand and code written this way easier to reuse.
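As a small, hedged illustration in Python (which is not a purely functional language; the helper names `is_even` and `square` are invented here), the same result can be computed by spelling out the steps or by composing side effect free functions:

```python
# Imperative: spell out how to build the result, mutating a list as we go.
squares_of_evens = []
for n in range(10):
    if n % 2 == 0:
        squares_of_evens.append(n * n)


# Functional: describe the result as a composition of side effect free functions.
def is_even(n):
    return n % 2 == 0


def square(n):
    return n * n


functional_result = list(map(square, filter(is_even, range(10))))
```

Because `is_even` and `square` depend only on their input, they can be tested and reused in isolation.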

Logic

The logic paradigm is based around expressing code as a set of logical axioms. These axioms can then be used as a form of knowledge base from which new knowledge can be derived and which can be queried. The programs themselves can then be posed as queries in this system. For example, if the knowledge is defined with the axioms "Tweety is a bird" and "Birds are animals", the system should be able to answer the query: "Is Tweety an animal?".
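A real logic language does this derivation for you. Purely to show the idea, the Tweety example can be imitated in a few lines of Python; the data representation and the `derive` and `query` helpers are assumptions of this sketch, not how any particular logic language works internally:

```python
# Facts and a rule, written as plain data (hypothetical representation).
facts = {("bird", "Tweety")}   # "Tweety is a bird"
rules = [("bird", "animal")]   # "Birds are animals"


def derive(facts, rules):
    """Apply the rules repeatedly until no new facts appear."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premise, conclusion in rules:
            for predicate, subject in list(known):
                new_fact = (conclusion, subject)
                if predicate == premise and new_fact not in known:
                    known.add(new_fact)
                    changed = True
    return known


def query(predicate, subject):
    """Answer whether a fact is stated or can be derived."""
    return (predicate, subject) in derive(facts, rules)
```

Here `query("animal", "Tweety")` succeeds even though that fact was never stated directly, only derived.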

A language can focus on supporting a particular paradigm heavily or have a strong preference for it. For example, Haskell and Clojure lean quite heavily on the functional paradigm, while Prolog is one of the main logic programming languages. Others provide an explicit merge of various methodologies, such as Scala, which combines elements of object orientation and functional programming.

Preference for a particular language can go beyond the programming paradigms used. Syntax, the structure of how code is written, can matter quite a bit for a person's view of a particular language. For example, Python uses indentation to mark which lines belong to a block of code, whereas many other languages use symbols such as braces.
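A tiny example of what that looks like in practice (the function `classify` is made up for this illustration):

```python
def classify(n):
    if n < 0:
        return "negative"   # indented lines belong to the `if` block
    return "non-negative"   # dedenting ends the block; no braces or `end` needed
```

Whether this reads as clean or as fragile is exactly the kind of thing people disagree on.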

Such preference can go even beyond the code itself, to the tools one uses to write it. While any text editor can often suffice, people can have differing expectations with regard to integrated development environments (IDEs) or other tools to edit and analyze the code. The lack or existence of specific tooling can also be a factor when deciding between languages.

Available Code and Libraries

Most coding is done with a particular purpose in mind, and it is rarely the case that the programmer can build everything from the ground up for such a task. In order to build interesting programs, one has to utilize existing knowledge, much like someone would utilize knowledge in a library to come to new insights.

The Bibliotheca Alexandrina. Photo © Carsten Whimster licensed under CC BY 3.0 https://creativecommons.org/licenses/by/3.0/

Existing code can be used as a foundation from which the program can be built. Roughly speaking, existing code comes in three main forms. It is either part of the language itself (often called the standard library of the language), an external library extending the language for a particular purpose, or the existing code base of an application that one can improve upon.

The standard library contains various functionality included with the language itself, for example ways of manipulating files, various connection protocols, support for certain file formats, etc. Of course it is very helpful if support for a feature that one aims to use is already available with the language itself. This means less code to write and connect. On the other hand, there is also some tension with regard to including too many features in the standard library, especially if certain parts of it become outdated, which enlarges the language and makes it more unwieldy.
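As one illustration, Python's standard library covers both file handling and the JSON file format, so a round trip through a file needs no external code. The `settings` data below is invented for the example:

```python
import json
import tempfile
from pathlib import Path

settings = {"language": "Python", "year": 2018}

# Everything used here ships with the language: temporary directories,
# path handling, file reading and writing, and JSON encoding and decoding.
with tempfile.TemporaryDirectory() as folder:
    path = Path(folder) / "settings.json"
    path.write_text(json.dumps(settings))   # encode and write to a file
    loaded = json.loads(path.read_text())   # read it back and decode
```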

The external libraries that one can use in a language can also influence the choice of a language. Certain languages have a lot of library support for specific tasks. For example, Python has a large and active following in the Data Science community. Other languages, such as Java, have a lot of support for many different tasks simply due to their age and user base. By using libraries one does not need to implement certain features from scratch, but can reuse existing work and focus on the specific problem at hand.

Finally, not all development starts from scratch. Often one has to make additions or improvements to an existing program, in which case the choice of language has already been made. While a rewrite of the code can often be tempting, linking code written in two different programming languages is not always trivial. It is often a good idea to continue with the existing language.

There are some exceptions to this, as some languages have been designed from the ground up to inter-operate with other languages. A good example of this is Clojure, which has great interop with Java and, through ClojureScript, with JavaScript. This allows it to leverage libraries that have already been written, and makes it much more attractive to use.

Existing Knowledge

Writing code is rarely trivial, and neither is learning new programming languages. Although previous experience helps, especially when dealing with languages with known paradigms, due to slight or large differences it can take a while to get used to the new language and libraries. With constantly looming deadlines and pressure to deliver, it can make sense to minimize the work that needs to be done. It is perfectly valid to work with a language that one already knows.

Curiosity

On the other hand, learning a new language, especially one with a new paradigm or other innovative features, can be quite interesting. It not only allows for work on existing code written in the new language, but it also gives insights into how to program, which benefits a programmer in general no matter what language they are using.

Speed

As mentioned earlier, commonly used programming languages are abstractions over machine code that can do more or less the same thing computationally. Which abstractions are used, however, can influence the speed of executing the program, as well as the time needed to translate the code from the programming language to machine code.

A common abstraction that can influence the speed of executing the program is how memory is managed. During the running of a program certain information needs to be stored. A way to do this is to allocate space in the computer's memory, keep it around while needed and remove it afterwards. This last step can be quite difficult to manage manually: if one removes the data prematurely, the program might crash or have other bugs. Not removing it fills the memory with garbage, which makes the program use up more and more memory until it crashes.

A solution to these problems is automatic garbage collection: a way for the computer to automatically manage and clean up memory. While this is a good solution in many cases, the process comes with an overhead, and it can be unpredictable when the time and resource consuming cleanup happens. In most cases this overhead is a trivial price to pay for eliminating a whole class of potential bugs. However, in certain scenarios, such as real-time high performance games, it could be too much to pay.
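To see a garbage collector at work, here is a small sketch using Python's `gc` and `weakref` modules. It assumes the standard CPython implementation, where two objects that reference each other are only reclaimed by the cycle collector; the `Node` class is made up for the example:

```python
import gc
import weakref


class Node:
    """A tiny object that can point at another node."""

    def __init__(self):
        self.other = None


a, b = Node(), Node()
a.other, b.other = b, a   # a reference cycle: a -> b -> a
probe = weakref.ref(a)    # lets us observe `a` without keeping it alive

del a, b                  # the program can no longer reach the cycle
gc.collect()              # ask the collector to reclaim unreachable objects
reclaimed = probe() is None
```

The programmer never frees the memory explicitly. The price is that, without the explicit `gc.collect()` call, the moment of cleanup is up to the runtime.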

The other issue of speed, translating the code from the programming language to machine code, can also be a consideration. Development requires making changes to code and checking whether the changes work. If the process of getting feedback takes a long time due to these translations, it can destroy a programmer's productivity. Golang is a language that is explicitly designed for fast compilation.

Safety

Safety is in many cases the flip side of the speed argument. Certain abstractions cost you speed but provide you with safety in return. Different languages tend to make different trade-offs in this regard. For example, one of the relatively new languages, Rust, aims to provide zero cost abstractions: abstractions with little to no run-time performance penalty.

One contentious aspect of safety is the use of type systems. Types allow the coder to specify various categories, such as numbers, persons, cars, etc., as well as the requirements these must fulfil within the context of the program. Types can be checked either statically, before the system is run, or dynamically, during the running of the program. Some people swear by very expressive type systems, where types can specify very detailed features of the things the program wants to represent. These types can then be used for checking code for correctness, both before and during the running of a program, as well as for documentation. On the other hand, type checking is not free: it can make compiling the code into machine code a much slower process. Some people also consider the writing and checking of types very cumbersome during initial development, where quick iteration can be slowed down by specifying detailed types.
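The difference between the two moments of checking can be sketched in Python, which checks types dynamically but allows optional annotations. The function `total_price` is invented for this example, and the claim about static checking assumes an external checker such as mypy is run over the code:

```python
def total_price(unit_price: float, quantity: int) -> float:
    # The annotations document intent. A static checker can compare callers
    # against them before the program runs; Python itself does not enforce them.
    return unit_price * quantity


try:
    total_price("9.99", "2")   # wrong types: only discovered when executed
    failed_at_runtime = False
except TypeError:
    failed_at_runtime = True
```

A static checker would reject the faulty call before the program ever runs. Without one, the mistake surfaces only when that line is actually executed.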

There is a whole spectrum of possible stances with regard to type systems. For example, certain languages such as Haskell and Idris are designed from the ground up with very expressive type systems that are statically checked. Others take a more balanced approach: Dart, for example, started off with optional types but adds mandatory types in its latest iteration to help with tooling. Golang explicitly has a static but minimalist type system that allows for fast compilation. There are also languages, such as Clojure, that use contract systems instead of static types to ensure safety at run-time and to allow for documentation and testing.
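The idea behind a run-time contract can be imitated in Python with a decorator. This is only a rough sketch of the concept and not how Clojure's contract system works; the `requires` decorator and the `discounted` function are hypothetical names invented here:

```python
import functools


def requires(predicate, message):
    """Hypothetical contract decorator: check a precondition on every call."""
    def decorate(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            if not predicate(*args, **kwargs):
                raise ValueError(message)
            return func(*args, **kwargs)
        return wrapper
    return decorate


@requires(lambda price, discount: 0 <= discount <= 1,
          "discount must be between 0 and 1")
def discounted(price, discount):
    return price * (1 - discount)
```

The contract doubles as documentation: a reader sees the allowed range for `discount` right next to the function, and a call such as `discounted(100, 2)` is rejected at run-time.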

Deployment

While most general purpose programming languages can be made to run in all environments, they are not always available. In certain environments, such as mobile or the web, only specific languages are supported. For example, on Android Java and Kotlin are officially supported, while JavaScript is the current lingua franca of the web. This means that it can be quite a herculean effort to make other languages work in such environments, and going with the most supported option is easier.

Certain languages get around this hindrance by using the more commonly supported language as the target to translate into. For example, ClojureScript compiles into JavaScript. In other cases, developers have made the effort to get frameworks up and running that allow the use of a different language, such as React Native and Flutter, which allow the use of JavaScript and Dart respectively to develop mobile applications.

The Team and Beyond

One final aspect of choosing a programming language, which can be surprisingly significant, is which language is beneficial to the team, as opposed to an individual developer. Different teams bring different expertise to the table, and while most professionals are quite willing and able to use a new language if it is most suited to the task at hand, learning it is still a cost that might be better spent on developing the application. From an employer's perspective it can also be beneficial to stick to more commonly used languages, as it can be easier to find future employees versed in the language used. On the other hand, there are many professionals who would jump at the chance of using the latest programming languages, in which case the choice of a newer or more niche language can be a competitive advantage from a recruiting perspective.

Conclusion

I hope this article gave some insight into why programmers pick and argue about programming languages. Despite all the various differences and arguments, it is also very important to note that great software, which is both excellent code and solves important problems, has been written in many different languages. And while picking the right tool for the job is important, it is just one aspect of the art of solving problems with code.