Dunes

2022-09-18

Stable Diffusion is one of the latest models capable of translating a piece of text, such as "arid, desert dunes", into great-looking images. Unlike some other tools and models, Stable Diffusion can be installed and run locally on a desktop machine (see this repo and instructions).

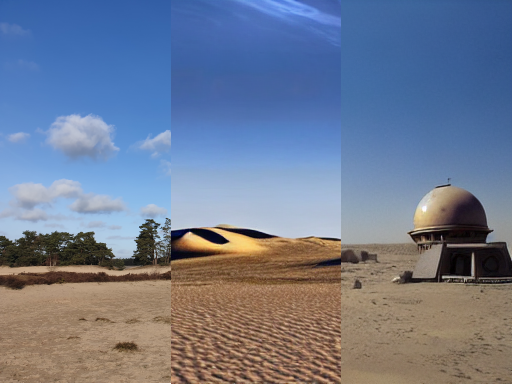

One of the great features of the Stable Diffusion model is the ability to combine a text input with a pre-existing image to generate new images. In this article I dive into this feature, starting with a photo of dunes that I took a while back. I will use descriptions based on sci-fi worlds with dunes, notably Arrakis from Dune and Tatooine from Star Wars, to create images from the original photo that look as if they came from these worlds.

The original photo was taken in the sand dunes of the Soester Duinen in the Netherlands. The sand here was deposited during the last ice age and was laid bare by intensive grazing during the Middle Ages. Almost all such areas in the Netherlands have been reclaimed from the sand, with the remaining dunes of the Soester Duinen now being maintained as geographical monuments.

Given this image, my goal was to use Stable Diffusion to transform it into sand dunes that one could find in a sci-fi setting.

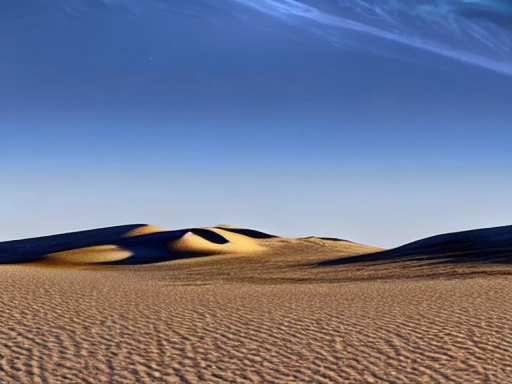

First I tried to get an image that looks more like the planet Arrakis, also known as Dune. One of the characteristics of this planet is that it is incredibly arid. To get something that resembles Arrakis, with less vegetation and fewer clouds, I need to provide a text prompt that emphasizes its harsh, arid nature.

I used the prompt "The planet Arrakis also known as Dune, arid, desert dunes, desolate, cloudless blue sky" to transform the image into the one that follows:

The Stable Diffusion model takes a number of parameters besides the text prompt when generating images. One that is specific to starting from an image is the Img2Img strength, which controls how far the model may depart from the initial image. Lower values keep the generated image closer to the initial image, while higher values let the model dream up new images more freely. For the images in this article the value 0.75 was used (unless noted otherwise). The effect of the base image is clearly visible in the layout of the Arrakis version, for example in how sand dunes have replaced the vegetation in the background.
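To make the effect of the strength value more concrete, here is a minimal sketch of how it could translate into sampling work. It assumes the convention used by the reference img2img script as I understand it, where the initial image is noised and then denoised for `int(strength * steps)` steps. The function name `denoising_steps` and the default of 50 sampling steps are illustrative assumptions, not the exact setup used for the images in this article.

```python
def denoising_steps(strength: float, total_steps: int = 50) -> int:
    """Return how many sampler steps are spent re-imagining the initial image.

    Assumption: the count is int(strength * total_steps), capped at total_steps.
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0 and 1")
    return min(int(strength * total_steps), total_steps)


# Compare a few strength values, including the 0.75 used in this article.
for strength in (0.25, 0.5, 0.75, 1.0):
    steps = denoising_steps(strength)
    print(f"strength={strength}: {steps} of 50 steps alter the image")
```

Under this assumption a strength of 0.75 leaves only a quarter of the sampling schedule untouched, which fits the observation that the layout of the photo survives while its content changes.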

It generally takes some trial and error to find the parameters that produce images fitting the creator's vision. Nonetheless, it is quite amazing how many good-looking images can be generated just by playing around with the parameters and the prompt.
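The trial and error can be organized as a small sweep. The sketch below only builds the list of combinations to try; it does not call any model. The strength values other than 0.75, the seeds, and the helper name `build_runs` are hypothetical choices for illustration, and each resulting dictionary would still need to be passed to whichever img2img tool is installed.

```python
from itertools import product

# Prompts taken from this article.
prompts = [
    "arid, desert dunes",
    "The planet Arrakis also known as Dune, arid, desert dunes, desolate, cloudless blue sky",
]
# Illustrative values around the 0.75 used for the images here.
strengths = [0.5, 0.75, 0.9]
# Hypothetical random seeds, so that each run can be reproduced later.
seeds = [0, 1]


def build_runs(prompts, strengths, seeds):
    """Return one settings dictionary per prompt/strength/seed combination."""
    return [
        {"prompt": prompt, "strength": strength, "seed": seed}
        for prompt, strength, seed in product(prompts, strengths, seeds)
    ]


runs = build_runs(prompts, strengths, seeds)
print(f"{len(runs)} candidate runs")
for run in runs[:3]:
    print(run)
```

Keeping the seed in each record makes it possible to regenerate a promising image later while changing only one parameter at a time.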

While Arrakis required quite a long text prompt to get close to the results I wanted, things are quite different with a Star Wars related prompt. The prompt "Tatooine, from Star Wars" is by itself enough to generate the following image from our original dune photo:

As one can see, even a short prompt is enough for the model to generate images that look like photographs taken on the planet Tatooine from Star Wars. It seems the system has a notion of what the architecture of buildings on the planet looks like in this setting and is capable of adding such buildings to the photograph.

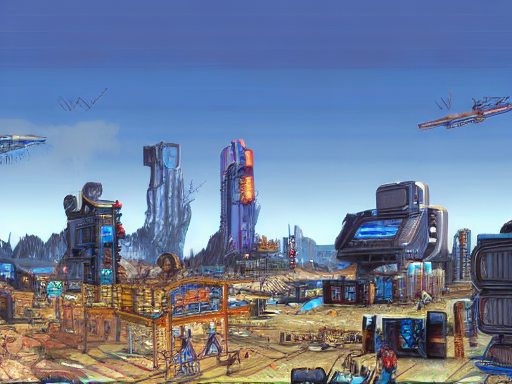

It is also possible to go beyond the well-known fictional planets and let the model dream up a setting.

The prompt "Cyberpunk settlement, detailed" gave the following results:

I hope I have given at least a small preview of what is possible with Stable Diffusion's img2img generation. It is quite a fun project to take photographs and use text prompts to turn them into all kinds of imaginary settings. Images of the next sci-fi setting set in the dunes could be just one prompt and one photograph away.